We’ve collected data on 12 languages – Kotlin, Scala, Clojure, Erlang, Swift, Elixir, Haskell, Rust, OCaml, Elm, PureScript, and Idris – from the functional programming family based on keywords, topics, hashtags, and @mentions across blogs, news sites, and social networks.

We analyzed 123,084 mentions of these languages to find out which functional programming languages people love most: which attract the highest share of positive sentiment, and which attract the highest share of negative sentiment.

Specifically, we looked at the percentage of positive and negative sentiment. Using data from Brand24, we uncovered some interesting findings, and now we want to share them with you. But first, for those who are curious about our methods and the Brand24 sentiment analysis, we have prepared a short overview of the methodology.

Can’t wait to see the outcomes?

https://scalac.io/ebook/functional-programming-languages-sentiment-analysis/functional-programming-languages-ranking-2021/


The sentiment analysis mechanism is based on widely used artificial intelligence techniques, namely deep neural networks and embeddings. In short, the texts that make up the mentions are converted into numerical sequences, which are much easier for the algorithm to work with than raw text strings. The second neural network, based on embeddings, was trained to distinguish between sentiments using several hundred thousand examples. The entire text of each entry found is analyzed.
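To make the idea of "numerical sequences" concrete, here is a minimal Python sketch of how text can be mapped to integer IDs before an embedding layer turns those IDs into vectors. It is only an illustration, not Brand24's actual pipeline: the helper names `build_vocab` and `encode`, the whitespace tokenization, and the sample mentions are all assumptions.

```python
def build_vocab(texts):
    """Assign each distinct lowercase token an integer ID.

    ID 0 is reserved for tokens that were never seen (an assumption
    of this sketch, not a documented Brand24 convention).
    """
    vocab = {}
    for text in texts:
        for token in text.lower().split():
            if token not in vocab:
                vocab[token] = len(vocab) + 1
    return vocab


def encode(text, vocab):
    """Convert a text into the sequence of integer IDs of its tokens."""
    return [vocab.get(token, 0) for token in text.lower().split()]


# Hypothetical mentions used only to build a tiny vocabulary.
mentions = ["Rust is great", "Scala is great too"]
vocab = build_vocab(mentions)

print(encode("Rust is great too", vocab))  # [1, 2, 3, 5]
print(encode("Elm is great", vocab))       # [0, 2, 3] ("elm" is unknown)
```

A real system would use a proper tokenizer and a learned embedding matrix; the point here is only that the model never sees raw strings.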

For example, thanks to this technology, the tool can distinguish between a “thin footballer” and a “thin TV”. In the first case (a footballer), the sentiment is classified as negative, while for a thin TV set it is classified as positive.

Dictionary analysis. In their database, Brand24 stores several thousand words related to emotions, both positive and negative. Each word has an assigned weight. In the first stage, the content is divided into sentences, and each sentence is checked separately for the presence of these words.
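The dictionary stage can be sketched roughly as follows. This is an assumption-laden illustration rather than Brand24's implementation: the `EMOTION_WEIGHTS` table, its weights, and the function names are hypothetical, and the sentence splitter is a simple regular expression.

```python
import re

# Hypothetical weights; the real dictionary is described as holding
# several thousand entries, and its values are not public.
EMOTION_WEIGHTS = {
    "love": 2.0,
    "great": 1.5,
    "good": 1.0,
    "bad": -1.0,
    "hate": -2.0,
}


def split_sentences(text):
    """Split text after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text)
    return [p.strip() for p in parts if p.strip()]


def score_sentence(sentence):
    """Sum the weights of all emotion words found in one sentence."""
    words = re.findall(r"[a-z']+", sentence.lower())
    return sum(EMOTION_WEIGHTS.get(word, 0.0) for word in words)


def score_mention(text):
    """Score every sentence of a mention separately."""
    return [(s, score_sentence(s)) for s in split_sentences(text)]


for sentence, score in score_mention("I love Elixir. The tooling is bad!"):
    print(f"{score:+.1f}  {sentence}")
```

Scoring per sentence, rather than per mention, keeps a positive remark about one thing from cancelling out a negative remark about another.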

Linguistic analysis. This stage implements language rules and the context analysis mentioned above. For example, an algorithm that finds the word “good” should check whether the word “day” appears directly before or after it, since “good day” is a greeting rather than an opinion. The algorithm currently uses many additional parameters, such as the distance of positive or negative words from the search subject.

Only those results which the system has qualified according to these parameters are marked as positive or negative.
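Two of the rules described above, the neighbouring-word check and the distance from the search subject, could look something like the sketch below. The word lists, the `NEUTRAL_NEIGHBOURS` table, the `MAX_DISTANCE` threshold, and the `classify` function are all hypothetical; the actual parameters used by Brand24 are not given in this report.

```python
import re

POSITIVE = {"good", "great"}
NEGATIVE = {"bad", "awful"}

# Hypothetical rule table: an emotion word is ignored when one of
# these words stands directly before or after it ("good day").
NEUTRAL_NEIGHBOURS = {"good": {"day", "morning"}}

# Hypothetical threshold: emotion words further than this many tokens
# from the search subject are not counted.
MAX_DISTANCE = 4


def classify(sentence, subject):
    """Label a sentence as positive, negative, or neutral for a subject."""
    tokens = re.findall(r"[a-z#+']+", sentence.lower())
    subject = subject.lower()
    if subject not in tokens:
        return "neutral"
    subject_pos = tokens.index(subject)

    score = 0
    for i, token in enumerate(tokens):
        if token not in POSITIVE and token not in NEGATIVE:
            continue
        # Collect the immediate neighbours of the emotion word.
        neighbours = set(tokens[max(i - 1, 0):i] + tokens[i + 1:i + 2])
        if neighbours & NEUTRAL_NEIGHBOURS.get(token, set()):
            continue  # e.g. "good day" is a greeting, not an opinion
        if abs(i - subject_pos) > MAX_DISTANCE:
            continue  # too far from the subject to be about it
        score += 1 if token in POSITIVE else -1

    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(classify("Haskell is really good", "Haskell"))
print(classify("Good day, I started learning Haskell", "Haskell"))
```

In this sketch the first sentence is labelled positive, while the second stays neutral because “good” is immediately followed by “day”.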

Most of the languages that we chose feel like natural choices. However, Kotlin, Rust and Swift might not seem obvious. They are not traditionally considered functional languages, but we chose them because to some extent they support this programming style. In addition, as the process of data extraction is complex and prone to errors, we were not able to include F# and Reason in our results, but we hope to change this in future editions and updates.

As we’ve already mentioned, this report is based on 123,084 mentions. This is the number of mentions we consider “qualified” for analytical purposes after applying filters to remove unwanted content; we explain these filters later in this section. In total, 963,941 mentions were aggregated by the Brand24 tool between November 1, 2020, and January 31, 2021. As you can see, not all of them were included in the analysis.
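To put the two figures in proportion, the share of aggregated mentions that qualified for analysis can be computed directly. The variable names are illustrative; the numbers are the ones quoted above.

```python
TOTAL_MENTIONS = 963_941      # aggregated by the tool in the period
QUALIFIED_MENTIONS = 123_084  # left after filtering

share = QUALIFIED_MENTIONS / TOTAL_MENTIONS
discarded = TOTAL_MENTIONS - QUALIFIED_MENTIONS

print(f"Qualified share: {share:.1%}")      # Qualified share: 12.8%
print(f"Discarded mentions: {discarded:,}")  # Discarded mentions: 840,857
```

In other words, roughly one in eight collected mentions made it into the ranking.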

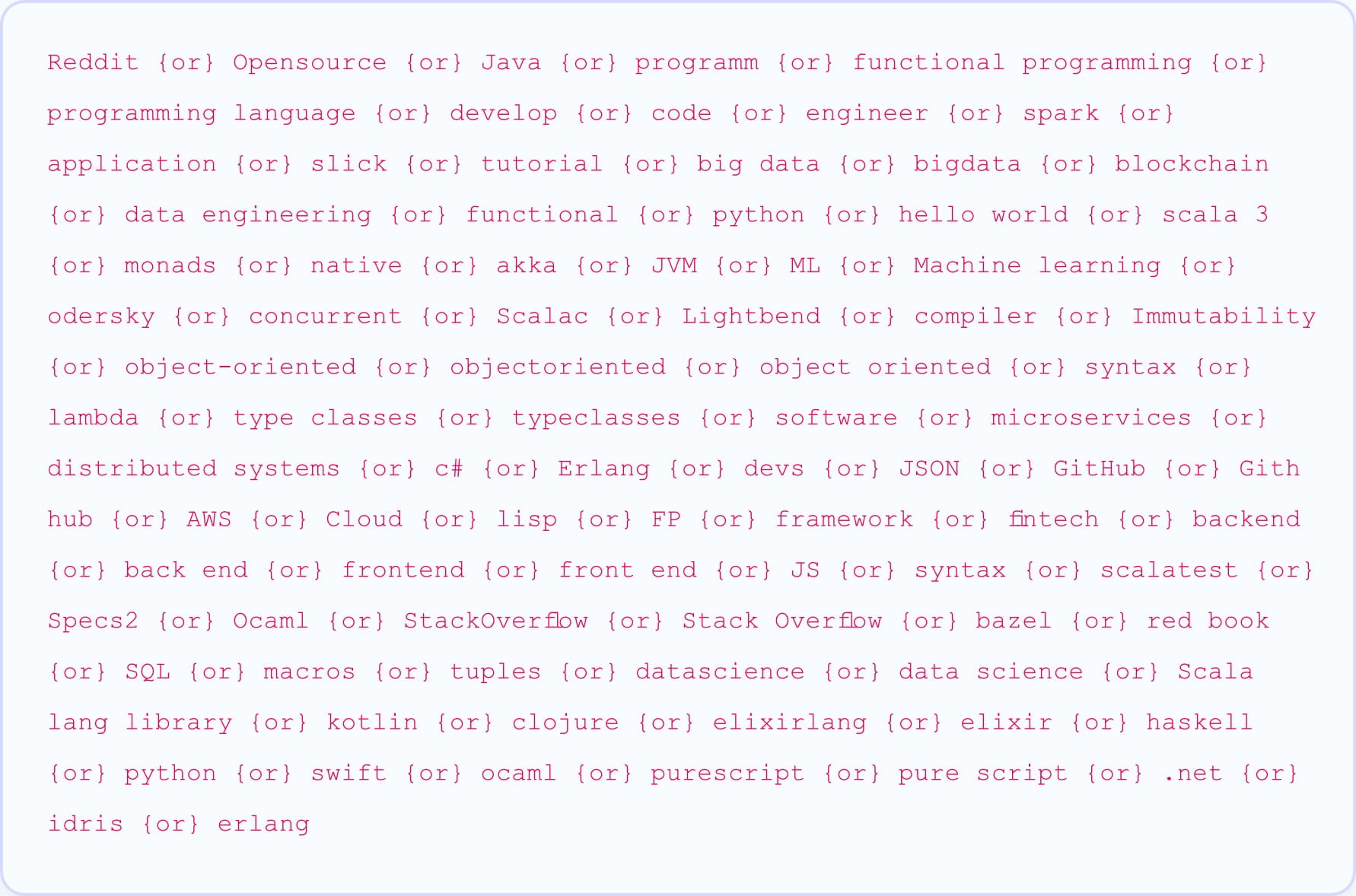

The platform by Brand24 is based on dictionary analysis, as mentioned above. Because of this, the gathered data included not only mentions of programming languages, but all mentions containing the keywords defined in the project (each language was a separate project). The keywords usually included one or two forms (if applicable) of the programming language name. For example, “Scala” was a single keyword, but “PureScript” appeared in two forms: “PureScript” and “Pure Script”. At the keyword level, the tool treats these two forms as distinct.

Besides keywords, we also defined “excluded keywords” where we knew straight away that the keyword had a broader meaning. For Scala, a natural exclusion was “La Scala”, and for Elm, they were “street” (A Nightmare on Elm Street) and “tree”.

As we started to analyze the mentions, we quickly realized that the amount of noise in our data was too great to handle with exclusions alone, so we decided to apply custom filters that enabled us to analyze only content related to the IT industry.
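The combination of keywords and excluded keywords can be pictured with the following Python sketch. The `PROJECTS` structure and the `matches_project` function are hypothetical and do not reflect how Brand24 stores its projects; only the keyword and exclusion values come from the text above.

```python
import re

# One project per language, as described above. The dictionary layout
# is an assumption made for this sketch.
PROJECTS = {
    "Scala": {"keywords": ["scala"], "excluded": ["la scala"]},
    "Elm": {"keywords": ["elm"], "excluded": ["street", "tree"]},
    "PureScript": {"keywords": ["purescript", "pure script"], "excluded": []},
}


def contains_phrase(text, phrase):
    """True if the phrase occurs in the text as whole words."""
    return re.search(rf"\b{re.escape(phrase)}\b", text) is not None


def matches_project(text, project):
    """Keep a mention if it has a keyword and no excluded keyword."""
    lowered = text.lower()
    has_keyword = any(contains_phrase(lowered, k) for k in project["keywords"])
    has_excluded = any(contains_phrase(lowered, k) for k in project["excluded"])
    return has_keyword and not has_excluded


print(matches_project("Scala 3 is out", PROJECTS["Scala"]))
print(matches_project("Tickets for La Scala in Milan", PROJECTS["Scala"]))
print(matches_project("A Nightmare on Elm Street", PROJECTS["Elm"]))
```

Only the first of these three mentions is kept; the other two contain an excluded keyword.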

An example of a custom filter looks like this:

As you can probably tell, the filter tells the algorithm to keep only those mentions that contain not just the keyword, but also at least one of the terms defined in the filter. This enabled us to get to the real “meat”. Of course, this method is not perfect, but it does give a statistical overview of how each language is represented on the internet.
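The logic of such a filter (the keyword plus at least one additional term) can be sketched in Python as below. The `IT_TERMS` list and the `passes_filter` function are made up for illustration; they are not the filter terms used for this ranking.

```python
import re

# Hypothetical context terms; the real filters used for the ranking
# are not reproduced here.
IT_TERMS = {"programming", "developer", "code", "compiler", "library"}


def passes_filter(text, keyword, required_any=IT_TERMS):
    """Keep a mention only if it contains the keyword AND at least
    one of the context terms."""
    words = set(re.findall(r"[a-z0-9#+]+", text.lower()))
    if keyword.lower() not in words:
        return False
    return not words.isdisjoint(required_any)


print(passes_filter("Swift is a nice programming language", "Swift"))
print(passes_filter("Taylor Swift announced a new tour", "Swift"))
```

Here the first mention passes because it contains “programming”, while the second is dropped even though it contains the keyword.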

This was our first time creating the ranking, but we would love to continue, so if you have any suggestions when it comes to the filters or our approach – let us know.