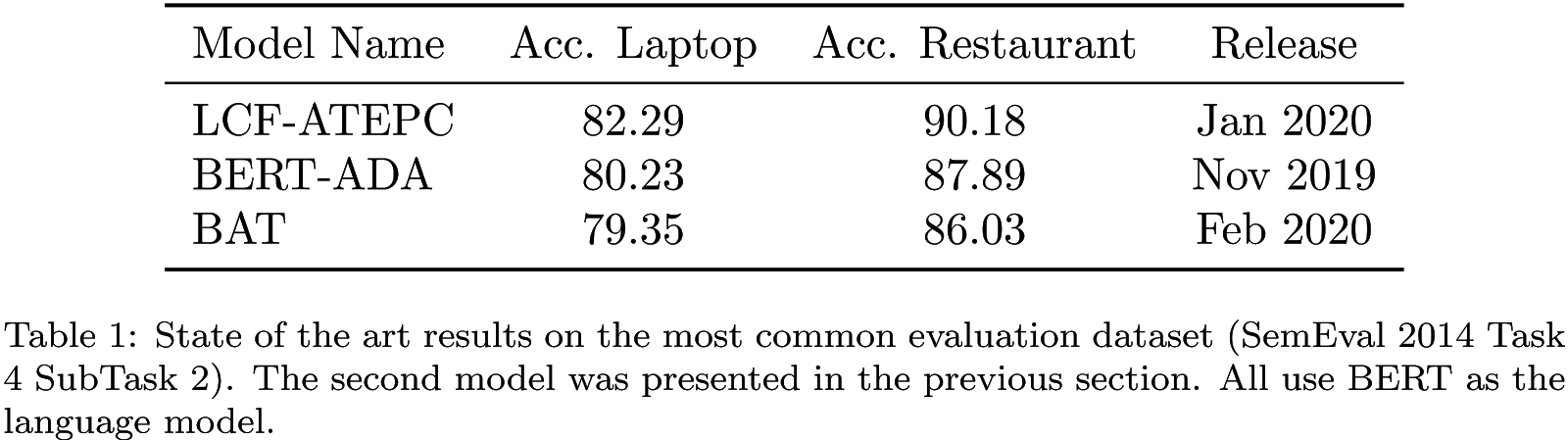

In the previous section, we presented an example of a modern NLP architecture whose core component is a language model. We abandoned task-specific, time-consuming feature engineering. Instead, thanks to language models, we can use powerful features: context-aware word embeddings. Transferring knowledge from language models has become extremely popular, and this approach now dominates the leaderboards of most NLP tasks, including aspect-based sentiment classification (see the table below). Nonetheless, we should interpret these results with caution.

A single metric might be misleading, especially when the evaluation dataset is modest (as in this case). As noted in the introduction, a model seeks any correlations it deems useful for making a correct prediction, regardless of whether they make sense to human beings. As a result, model reasoning and human reasoning can be very different: a model encodes dataset specifics that are invisible to humans. In return for the high accuracy of massive modern models, we have little control over model behavior, because both the model's reasoning and the dataset characteristics that the model is trying to map precisely are unclear. This is a serious problem because, during inference, we are much more likely to expose a model unknowingly to completely unusual examples, and this can cause unpredictable behavior. To avoid such dangerous situations and to better understand what is beyond the comprehension of a model, we need to construct additional tests: fine-grained evaluations.

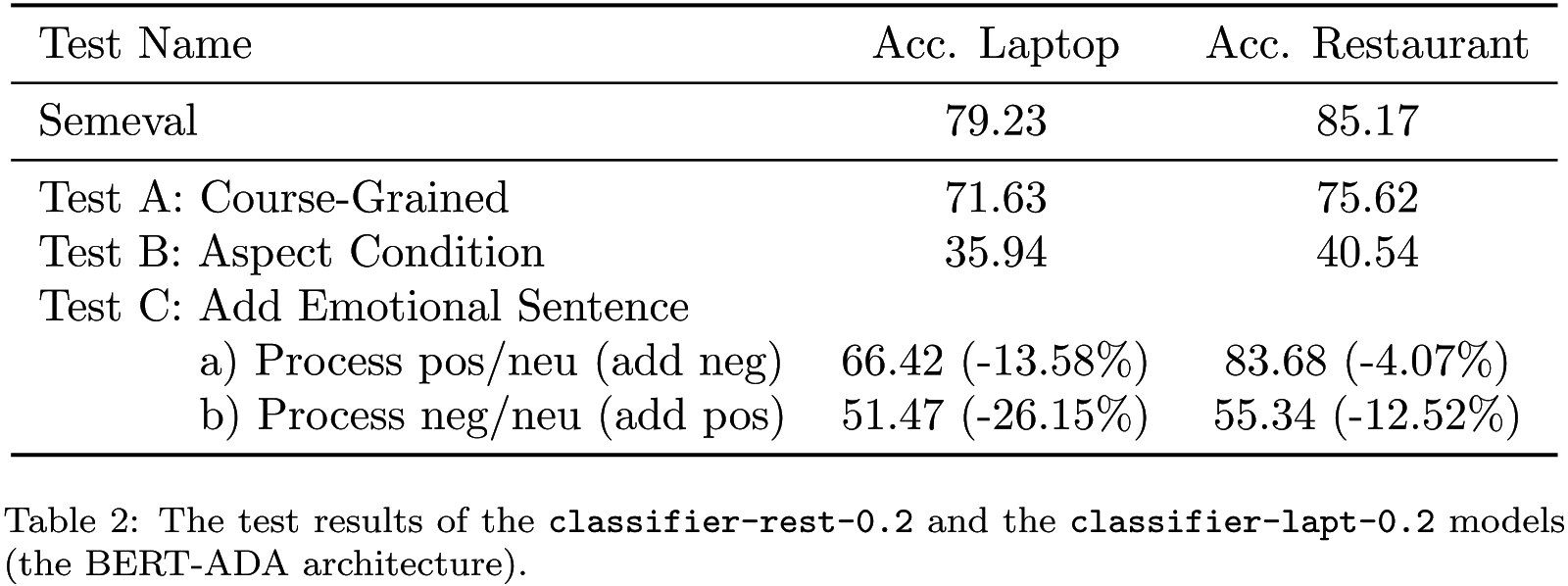

In the table below, we present three exemplary tests that roughly estimate model limitations. To be consistent, we examine the BERT-ADA model introduced in the previous section. Test A checks how crucial the information about an aspect is. We force the model to predict sentiment without providing any aspect, so the task becomes a basic, aspect-independent sentiment classification (details are here). Test B verifies how a model takes an aspect into account. We force the model to predict using unrelated aspects instead of the correct ones: manually selected and verified simple nouns that are not present in the dataset, even implicitly. We process positive and negative examples, expecting to get neutral sentiment predictions (details are here). Test C examines how precisely a model separates information about a requested aspect from information about other aspects. In the first case, we process positive and neutral examples and add to each text a negative emotional sentence about a different aspect, for instance “The {other aspect} is really bad.”, expecting the model to keep its predictions unchanged. In the second case, we process negative and neutral examples with an additional positive sentence, “The {other aspect} is really great.” In both cases, we use the verified unrelated aspects from test B (details are here).
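The three test sets described above could be assembled roughly as follows. This is a minimal sketch, not the code behind the reported results: the `Example` record, the function names, the empty string standing for "no aspect", and the sample noun `car` are all assumptions made for illustration.

```python
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class Example:
    """Hypothetical record for one aspect-based example."""
    text: str
    aspect: str
    sentiment: str  # "positive", "negative", or "neutral"


def build_test_a(examples: List[Example]) -> List[Example]:
    # Test A: withhold the aspect; the expected sentiment stays the same.
    # Assumption: an empty string stands for "no aspect provided".
    return [replace(e, aspect="") for e in examples]


def build_test_b(examples: List[Example], unrelated_aspect: str) -> List[Example]:
    # Test B: keep positive and negative examples, swap in an unrelated
    # aspect, and expect a neutral prediction.
    return [
        replace(e, aspect=unrelated_aspect, sentiment="neutral")
        for e in examples
        if e.sentiment in ("positive", "negative")
    ]


def build_test_c(
    examples: List[Example], other_aspect: str, added_polarity: str
) -> List[Example]:
    # Test C: append an emotional sentence about another aspect and
    # expect the original sentiment to be preserved.
    if added_polarity == "negative":
        kept, word = ("positive", "neutral"), "bad"
    elif added_polarity == "positive":
        kept, word = ("negative", "neutral"), "great"
    else:
        raise ValueError("added_polarity must be 'negative' or 'positive'")
    sentence = f"The {other_aspect} is really {word}."
    return [
        replace(e, text=f"{e.text} {sentence}")
        for e in examples
        if e.sentiment in kept
    ]


# Made-up samples; "car" is a placeholder for a verified unrelated noun.
samples = [
    Example("The pasta was delicious.", "pasta", "positive"),
    Example("The service was slow.", "service", "negative"),
    Example("We ordered the soup.", "soup", "neutral"),
]
test_a = build_test_a(samples)
test_b = build_test_b(samples, "car")
test_c_first = build_test_c(samples, "car", "negative")
test_c_second = build_test_c(samples, "car", "positive")
```

Keeping the expected label inside each generated example means every test can be scored with the same evaluation loop as the original dataset.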

Test A confirms that the coarse-grained classifier achieves good results as well (without any adjustments). This is not good news. If a model predicts a sentiment correctly without taking the aspect into account then, roughly speaking, there is no gradient towards patterns that support aspect-based classification, and the model has nothing to improve in this direction. Consequently, at least 70% of the already limited dataset will not help to improve aspect-based sentiment classification. In addition, these examples might even be disruptive, because they could overwhelm the examples that require multi-aspect consideration. Multi-aspect examples might be treated as outliers and gradually washed out, for example when gradients are averaged over a batch (the test summary is here).
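The dilution effect can be illustrated with a toy calculation. The numbers below are made up for illustration and are not measurements from the tests. The sketch also assumes, as a simplification, that an example already solved without the aspect contributes exactly zero gradient along a hypothetical "use the aspect" direction.

```python
# Toy illustration with made-up numbers, not measurements from the tests.
# Each value is one example's gradient component along a hypothetical
# "use the aspect" direction in parameter space.
batch_size = 10
aspect_independent = 7  # about 70% of the batch: solvable without the aspect
multi_aspect = batch_size - aspect_independent

# Simplifying assumption: examples that are classified correctly without
# the aspect contribute nothing along this direction.
per_example_grads = [0.0] * aspect_independent + [1.0] * multi_aspect

# Averaging over the batch shrinks the signal from multi-aspect examples.
batch_grad = sum(per_example_grads) / batch_size
print(batch_grad)  # 0.3
```

Under these assumptions, the update that the multi-aspect examples ask for is scaled down to 30% of its per-example size, which is the sense in which such examples can be washed out.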

Test B demonstrates that a model may not truly solve the aspect-based condition, because it treats an aspect mainly as a feature, not as a constraint. In only 35 to 40% of cases does the model correctly recognize that the text does not concern the given aspect and return a neutral sentiment instead of positive or negative. The model’s accuracy in terms of aspect-based classification is therefore questionable. One might anticipate this from the model architecture, which has no dedicated mechanism to impose the aspect-based condition (the test summary is here).
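The figure reported for test B is simply the share of neutral predictions on examples paired with an unrelated aspect. A minimal sketch of that measurement, assuming predictions arrive as plain label strings (the function name and the sample predictions are hypothetical):

```python
from typing import Sequence


def neutral_rate(predictions: Sequence[str]) -> float:
    """Share of predictions equal to "neutral"."""
    if not predictions:
        raise ValueError("predictions must not be empty")
    return sum(p == "neutral" for p in predictions) / len(predictions)


# Made-up predictions for five test B examples.
preds = ["neutral", "positive", "negative", "neutral", "positive"]
rate = neutral_rate(preds)
print(rate)  # 0.4
```

A model that treated the aspect as a hard constraint would score close to 1.0 here.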

Test C shows that a model separates information about different aspects well. The reference performance is given in brackets to show how much the enriched texts decrease model performance on a) positive/neutral and b) negative/neutral examples. In most cases, the model correctly deals with a basic multi-aspect problem and recognizes that an added sentence, highly emotional in the opposite direction, does not concern the given aspect. Although test B shows that the model in some cases neglects information about an unrelated aspect, this test reveals that the aspect is a vital feature when a text concerns many aspects, including the given one, and the model needs to separate information about them (the test summary is here).
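The comparison against the reference can be sketched as an accuracy difference between the original and the enriched texts. The helper names and the sample predictions below are assumptions for illustration, and the summary referred to above may use a different metric.

```python
from typing import Sequence


def accuracy(predictions: Sequence[str], labels: Sequence[str]) -> float:
    """Fraction of predictions that match the labels."""
    if not labels or len(predictions) != len(labels):
        raise ValueError("predictions and labels must be non-empty and aligned")
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def accuracy_drop(
    reference_preds: Sequence[str],
    enriched_preds: Sequence[str],
    labels: Sequence[str],
) -> float:
    # Positive values mean the added sentence hurt performance.
    return accuracy(reference_preds, labels) - accuracy(enriched_preds, labels)


# Made-up labels and predictions for four positive/neutral examples.
labels = ["positive", "neutral", "positive", "neutral"]
reference_preds = ["positive", "neutral", "positive", "positive"]
enriched_preds = ["positive", "neutral", "negative", "positive"]
drop = accuracy_drop(reference_preds, enriched_preds, labels)
print(drop)  # 0.25
```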

Model behavior tests like those shown above can provide many valuable insights. Unfortunately, papers describing state-of-the-art models tend to skip tests that may expose model limitations. In contrast, we encourage you to run such tests. Even though they might be time-consuming and tedious, test-driven model development is powerful because you can understand model defects in detail, fix them, and make further improvements more smoothly.