There are many properties needed to keep an explanation consistent and reliable but one is fundamental. The explanation should clearly indicate the most important (in terms of decision-making) token at least. To confirm whether the proposed BasicPatternRecognizer provides patterns that support this property or not, we can do a simple test (implementation is here).

We mask in a text the most important (according to the patterns) token, and observe if the model changes the decision or not. The example might contain several key tokens, tokens that masked (independently) cause a change in the model’s prediction. The key assumption of this test is that the chosen token should belong to the group of key tokens if it is truly significant.

import aspect_based_sentiment_analysis as absa

patterns = ... # PredictedExmple.review.patterns

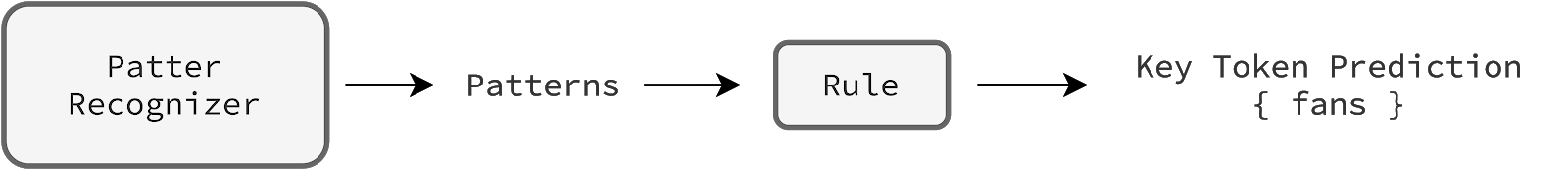

key_token_prediction = absa.aux_models.predict_key_set(patterns, n=1)It is important to be aware that the key token prediction comes from a pattern recognizer indirectly. We set up a plain rule predict_key_set (details are here) that sums the weighted (by importance values) patterns, and predicts a key token (in this case). Moreover, note that the test is simple and convenient because we are able to reveal the valid key tokens (checking only n combinations at most) needed to measure the test performance precisely.

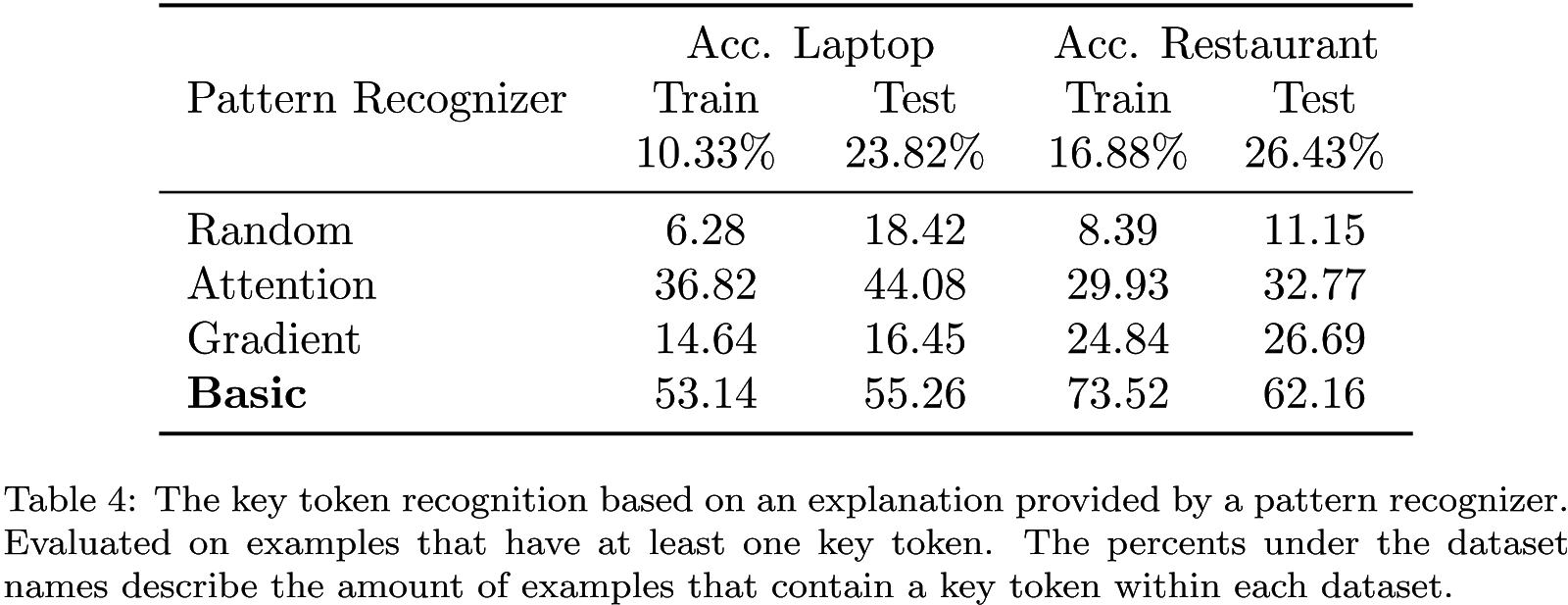

In the table above, we compare four pattern recognizers (details of other recognizers are here. For example, around 24% of the laptop test examples have at least one key token (others we filter out). Of those, around 55% of cases, the chosen token based on an explanation from the basic pattern recognizer is the key token. From this perspective, the basic pattern recognizer is more precise than other methods (more test results are here). It’s interesting that test datasets have significantly more examples that contain a key token. It suggests that model reasoning is different during processing known and unknown examples. In the next sections, we further analyse pattern recognizers solely on more reliable test datasets.