There is a second, more important reason why we have introduced the professor. The professor’s main role is to explain model reasoning, something which is extremely hard. We are far from explaining model behavior precisely even though it is crucial for building intuition to fuel further research and development. In addition, model transparency enables an understanding of model failures from various perspectives, such as safety (e.g. adversarial attacks), fairness (e.g. model biases), reliability (e.g. spurious correlations), and more.

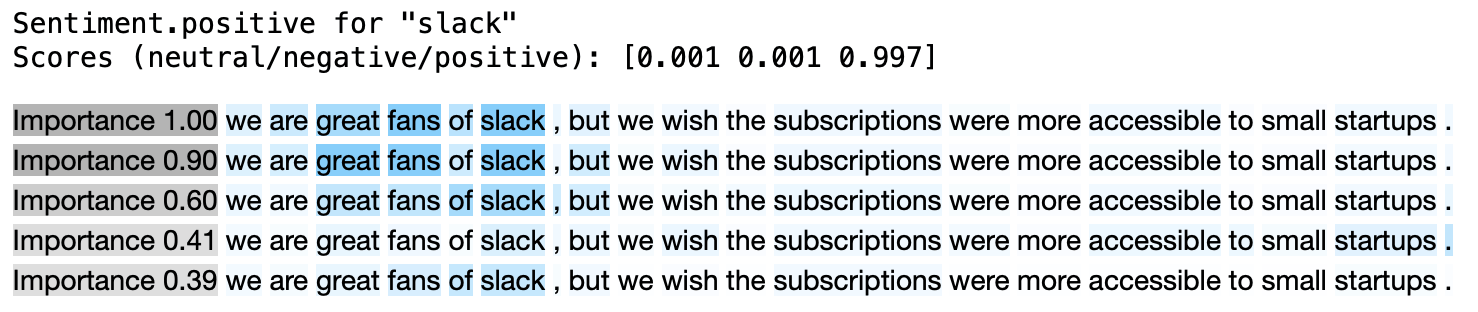

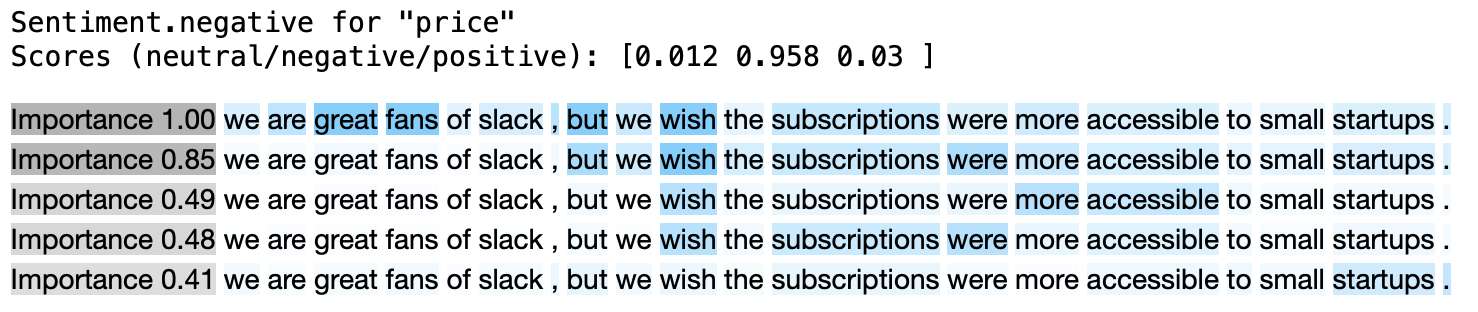

Explaining model decisions is challenging, and not only because of model complexity. As we mentioned before, models make decisions in a completely different way from people. We need to translate abstract model reasoning into a form understandable to humans. To do so, we have to break down model reasoning into components that we can understand. These are called patterns. A pattern is interpretable and has an importance attribute (within the range [0, 1]) that expresses how much that pattern contributes to the model prediction.

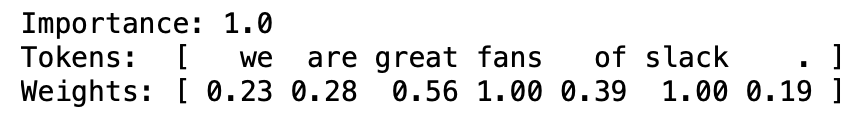

A single pattern is a weighted composition of tokens. It is a vector that assigns each token a weight (within the range [0, 1]) that defines how strongly the token relates to the pattern. For instance, a one-hot vector is a simple pattern composed exclusively of a single token. This interface, the pattern definition, can convey both simple and more complex relations. It can capture key tokens (one-hot vectors), key token pairs, or more tangled structures. The more complex the interface (the pattern structure), the more detail can be encoded. In the future, the interface of model explanation might be natural language itself. It would be great, for example, to read an explanation of a decision in the form of an essay.
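
To make the pattern definition concrete, here is a minimal sketch of a pattern as a data structure. The `Pattern` class, the `one_hot_pattern` helper, and the `key_tokens` method are hypothetical names introduced for illustration only; they are not taken from the package, whose own types may look different.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Pattern:
    """Hypothetical container for one pattern (not the package's own class)."""
    importance: float     # contribution to the prediction, in [0, 1]
    tokens: List[str]     # the tokens the weights refer to
    weights: List[float]  # one weight per token, each in [0, 1]

    def __post_init__(self) -> None:
        if len(self.tokens) != len(self.weights):
            raise ValueError("tokens and weights must have the same length")
        if not 0.0 <= self.importance <= 1.0:
            raise ValueError("importance must lie in [0, 1]")
        if any(not 0.0 <= w <= 1.0 for w in self.weights):
            raise ValueError("every weight must lie in [0, 1]")

    def key_tokens(self, threshold: float = 0.5) -> List[str]:
        """Return the tokens whose weight reaches the threshold."""
        return [t for t, w in zip(self.tokens, self.weights) if w >= threshold]


def one_hot_pattern(tokens: List[str], index: int, importance: float) -> Pattern:
    """Build the simplest pattern: all weight on a single token."""
    weights = [1.0 if i == index else 0.0 for i in range(len(tokens))]
    return Pattern(importance=importance, tokens=list(tokens), weights=weights)


tokens = ["the", "price", "is", "too", "high"]
# A key-token pattern (one-hot vector).
single = one_hot_pattern(tokens, index=4, importance=0.9)
# A key-token-pair pattern with graded weights.
pair = Pattern(importance=0.6, tokens=tokens, weights=[0.0, 0.1, 0.0, 0.8, 1.0])
```

The same vector interface covers both cases: the one-hot pattern highlights a single token, while the second pattern spreads weight over a pair of tokens.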

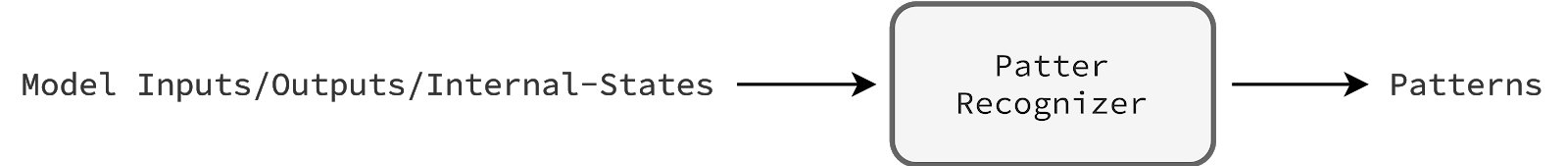

The key concept is to frame the problem of explaining a model decision as an independent task in which an auxiliary model, the pattern_recognizer, predicts patterns given model inputs, outputs, and internal states. This is a flexible definition, so we will be able to test various recognizers in the long run. We can try to build a model-agnostic pattern recognizer (independent of the model architecture and parameters). We can customize inputs: for instance, take internal states into account or not, and analyze a model holistically or derive conclusions from a specific component only. Finally, we can customize outputs by defining sufficient pattern complexity. Note that it is challenging to design training and evaluation because the true patterns are unknown. As a result, extracting complex patterns correctly is extremely hard. Nonetheless, there are a few successful methods for training a pattern recognizer that can reveal latent rationales. In these methods, the pattern recognizer tries to mask out as many input tokens as possible while keeping the original prediction unchanged (e.g. the DiffMask method).
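
The mask-out idea can be illustrated with a toy sketch. This is not DiffMask, which learns its masks with a trained model; it is a simple greedy loop, and `toy_classifier`, `greedy_mask_out`, and the cue-word lists are all made up for illustration. The sketch only shows the constraint at work: hide as many tokens as possible while the prediction stays the same.

```python
from typing import Callable, List, Sequence

MASK = "[MASK]"


def toy_classifier(tokens: Sequence[str]) -> str:
    """Stand-in for a real model: counts a few hand-picked cue words."""
    positive = {"great", "good", "fast"}
    negative = {"bad", "slow", "expensive"}
    score = sum(t in positive for t in tokens) - sum(t in negative for t in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def greedy_mask_out(
    tokens: Sequence[str],
    predict: Callable[[Sequence[str]], str],
) -> List[float]:
    """Try to mask tokens left to right, keeping each mask only if the
    prediction is unchanged. Returns a 0/1 weight per token, where 1.0
    marks a token that had to stay visible."""
    original = predict(tokens)
    current = list(tokens)
    for i in range(len(current)):
        candidate = current[:i] + [MASK] + current[i + 1:]
        if predict(candidate) == original:
            current = candidate  # this token is not needed for the decision
    return [0.0 if t == MASK else 1.0 for t in current]


weights = greedy_mask_out(["great", "screen"], toy_classifier)
```

Here `weights` marks "great" as the only token the toy prediction depends on. A greedy pass like this depends on the order in which tokens are tried, which is one reason a learned recognizer is attractive.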

Due to time constraints, we did not initially want to research and build a trainable pattern recognizer. Instead, we decided to start with a pattern recognizer that originates from our observations and prior knowledge. The model, the aspect-based sentiment classifier, is based on the transformer architecture, wherein self-attention layers hold a large share of the parameters. Therefore, one might conclude that understanding self-attention layers is a good proxy for understanding the model as a whole. Accordingly, there are many articles [1, 2, 3, 4, 5] that show how to explain a model decision in simple terms by using attention values (internal states of self-attention layers) directly. Inspired by these articles, we have also analyzed attention values (processing training examples) to search for meaningful insights. This exploratory study has led us to create the BasicPatternRecognizer (details are here).

```python
import aspect_based_sentiment_analysis as absa

# This pattern recognizer doesn't have trainable parameters,
# so we can initialize it directly, without setting weights.
recognizer = absa.aux_models.BasicPatternRecognizer()
professor = absa.Professor(pattern_recognizer=recognizer)

# Examine the model decision.
nlp = absa.Pipeline(..., professor=professor)  # Set up the pipeline.
completed_task = nlp(text=..., aspects=['slack', 'price'])
slack, price = completed_task.examples
absa.display(slack.review)  # It plots inside a notebook straightaway.
absa.display(price.review)
```