Natural Language Processing is in the spotlight at the moment, mainly because of the stunning capabilities of modern language models. They can transform text into meaningful vectors that approximate human understanding of text. As a result, computer programs can now analyze texts (documents, emails, tweets, etc.) in roughly the same way people do. The power of modern language models has encouraged the NLP community to build many other, more specialized models that aim to solve specific problems, for example, sentiment classification. The community has built up open-source projects that provide easy access to countless fine-tuned models. Unfortunately, the usefulness of the majority of these is questionable when it comes to real-world applications.

ML models are powerful because they approximate a desired transformation, a mapping between inputs and outputs, directly from the data. By design, a model picks up any correlations in the data that are useful for making the correct mapping. This simple, restriction-free rule gives rise to complex model reasoning that can approximate any logic behind the transformation. This is the big advantage of ML models, especially when the transformation is intricate and vague. However, the same restriction-free process can also be a major headache because it makes the model hard to control.

It is hard to force a model to capture a general problem (e.g. sentiment classification) solely by asking it to solve a specific task, whether that is a common evaluation task (e.g. SST-2) or a custom-built task based on labeled data sampled from production. This is because the model discovers any useful correlations, regardless of whether they describe the general problem well and make sense to human beings or are only effective at solving the specific task. Unfortunately, this second group of adverse correlations, which encode dataset specifics, can cause unpredictable model behavior on data that is even slightly different from the data used in training. Because of this, it is important to test whether model behavior is consistent with expected behavior. This is crucial because a model working in a real-world application has to process data that changes over time.

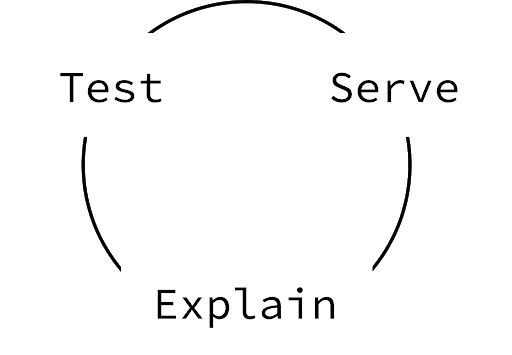

Before serving a model, the key step is to form valuable tests that confirm whether the model reasoning satisfies our expectations. A model that meets the test conditions is stable at least within the test boundaries. Note that what lies beyond the test boundaries is unknown. Unfortunately, as long as neural networks form reasoning without restrictions, we cannot be sure that the model will behave according to our expectations in every case (even if it passes all of the tests). Nonetheless, the risk of unexpected behavior can and should be minimized.
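To make the idea of a behavioral test concrete, here is a minimal sketch of an invariance check: the prediction should not change when a sentence is rewritten in a way that preserves its sentiment. The `toy_classifier` is a hypothetical keyword lookup standing in for a real model, and the function name and sentence pairs are our own illustrative assumptions, not part of any library.

```python
from typing import Callable, List, Tuple

# Any callable that maps a text to a sentiment label can be tested.
Classifier = Callable[[str], str]


def toy_classifier(text: str) -> str:
    """Hypothetical stand-in model: a keyword lookup, not a real language model."""
    lowered = text.lower()
    if any(word in lowered for word in ("great", "delicious", "friendly")):
        return "positive"
    if any(word in lowered for word in ("slow", "rude", "cold")):
        return "negative"
    return "neutral"


def invariance_failures(
    model: Classifier, pairs: List[Tuple[str, str]]
) -> List[Tuple[str, str]]:
    """Return the pairs for which the label changes under a
    sentiment-preserving rewrite of the input."""
    failures = []
    for original, perturbed in pairs:
        if model(original) != model(perturbed):
            failures.append((original, perturbed))
    return failures


# Illustrative pairs: each rewrite should leave the sentiment untouched.
pairs = [
    ("The pizza at Luigi's was delicious.", "The pizza at Mario's was delicious."),
    ("The waiter was rude.", "The waiter was rude to us."),
    ("The service was slow.", "The service was sluggish."),
]

failures = invariance_failures(toy_classifier, pairs)
for original, perturbed in failures:
    print(f"Label changed: {original!r} -> {perturbed!r}")
```

In this toy setup the third pair fails because the stand-in model relies on the exact keyword "slow", which is the kind of dataset-specific shortcut such a test is meant to expose. An empty list of failures would only tell us the model is stable on these particular pairs, not beyond them.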

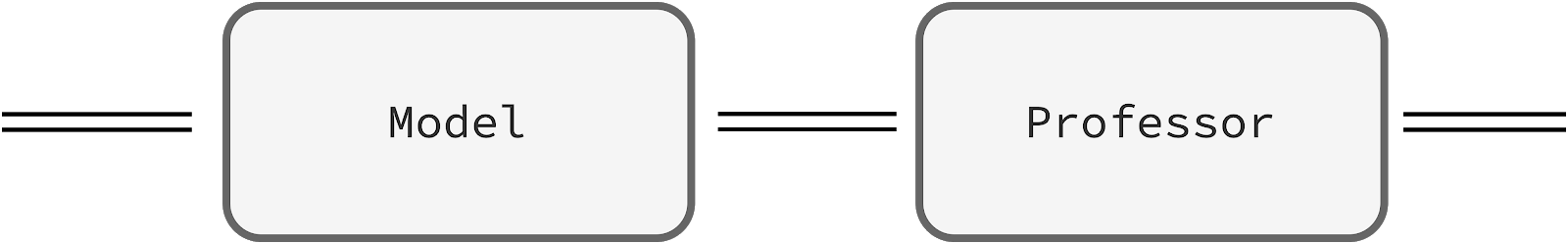

The tests assure us that the model is working properly at the time of deployment. Performance commonly starts to decline over time, so it’s vital to monitor the model once it’s served. It is hard to track unexpected model behaviors with only a prediction to go on. Therefore, the proposed pipeline is enriched by an additional component called the professor. The professor reviews the model's internal states, supervises the model, and provides explanations of model predictions that help to reveal suspicious behaviors. The explanations not only give us more control over the served model but also enhance further development. Analyzing the explanations can lead to newer, more demanding tests that force the model to improve.
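As a rough illustration of the reviewing role described above, the sketch below attaches a small review to each prediction. It is not the professor's actual implementation: the `Review` structure, the `review_prediction` function, the confidence threshold, and the per-token weights are all hypothetical, and we simply assume that the model exposes class scores and some token-level importance values.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Review:
    label: str             # predicted class
    confidence: float      # score of the predicted class
    top_tokens: List[str]  # tokens that contributed most, as a crude explanation
    suspicious: bool       # True when the prediction deserves a closer look


def review_prediction(
    scores: Dict[str, float],
    token_weights: Dict[str, float],
    min_confidence: float = 0.6,  # assumed threshold, to be tuned per application
    top_k: int = 2,
) -> Review:
    """Hypothetical reviewer: summarize a prediction and flag low confidence."""
    label = max(scores, key=scores.get)
    confidence = scores[label]
    top_tokens = sorted(token_weights, key=token_weights.get, reverse=True)[:top_k]
    return Review(label, confidence, top_tokens, confidence < min_confidence)


# Made-up scores and token weights for the sentence "the food was fine".
review = review_prediction(
    scores={"positive": 0.48, "neutral": 0.12, "negative": 0.40},
    token_weights={"the": 0.05, "food": 0.30, "was": 0.05, "fine": 0.60},
)
print(review)
```

Here the prediction is flagged because the winning class barely beats the runner-up. Flagged examples, together with their most influential tokens, are natural candidates for new test cases.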

We believe that both testing and explaining model behaviors are important in building stable, production-ready ML models. This is especially important in cases where a huge model is fine-tuned on a modest dataset because, due to the nature of ML models, it can be hard to define the desired task precisely. This is a fundamental problem in many downstream NLP tasks. In this article, we focus on aspect-based sentiment classification.