We re-wrote the process of data retrieval, processing and distribution (ETL) in a streaming manner. This allowed us to reduce the costs, as we no longer needed a Spark cluster, with only a single machine required to handle the same or larger amount of data in a resource-safe manner.

Such approach was possible thanks to the reliable implementation of Reactive Manifesto in Akka Streams, meaning we introduced a solution that was:

1. responsive – the solution provides a response no matter the process output

2. resilient – the solution remains responsive after a failure, which is ensured by isolating its components

3. flexible – the solution remains responsive no matter the size of the input workload

4. message-driven – Akka Streams handles message-driven architecture out of the box allowing us to maintain the process using convenient high-level API

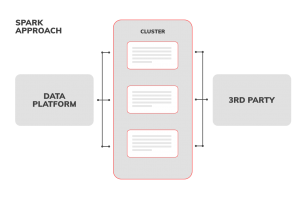

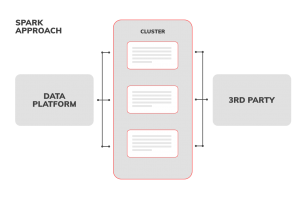

Spark approach

The Data Platform provides the data to the Spark cluster manager. Then, the data are spread across cluster nodes, so each node processes a subset of the whole dataset. After finished data processing tasks are finally send to the 3rd party to run.

Even though the processing time is not a critical factor in our case, given approach worked well only at the beginning with the initial 3rd party channel.

Soon it became clear there are more drawbacks than advantages:

• higher costs due to the need for spawning the cluster

• the data platform can produce very different amounts of data for each channel, from a few MB to tens of GB

• high memory consumption, but small CPU utilization

• the number of nodes in the cluster was fixed no matter what the data size is

• each node had to fit a subset of data into memory, sometimes just a few hundreds of MB, sometimes few GB

• it quickly becomes clear that some 3rd party channels can take data in parallel using HTTP calls, but some of them require to combine the whole data set into a single processed file. The cluster of nodes made it difficult to gather data back together effectively, combine and compress the data.

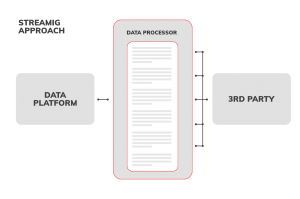

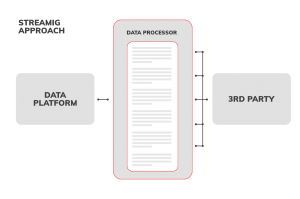

Streaming approach

The Data Platform provides the data in a streaming manner, never exceeding the resources of the VM. Then, the data are processed by a single Data Processor, also in a resource safe, streaming way. Now it’s up to the implementation of the data processor to either push processed data to 3rd party in parallel (e.g., many parallel HTTP calls) or build a single file which later on is stored on GCS or S3 instance.

• lower costs, less VMs needed • constant, safe memory consumption

• possibility to use multiple cores to process the data

• can handle infinite amounts of data

• 3rd party flexible – from a single to multi-parallel data file ingestion

• the stream can be parallelized during various stages of the process allowing us to use the VM power to the max

• whole process execution time is either the same or faster compared to the previous approach