Functional Programming vs OOP

As a young, bright-eyed, bushy-tailed engineer starting my career at NASA in the 90s, I was fortunate enough to develop engineering-oriented software that modeled the different parts of the International Space Station. The million-line codebase was based on objects. Almost every part of the space station was represented as an object, from the overall segments to the four 100-Kg concrete wheels that go 6,600 RPM in order to control the orientation of the station. Needless to say, this was awe-inspiring and ended up marking the beginning of the rest of my career. The way I saw and developed software was now guided by this thing called object-oriented programming (OOP). It has served me well and was a much-welcome departure from procedural programming.

17 years later, I would find myself in a similarly-fortunate situation when my colleague inspired me to look in earnest at Scala. This led me to functional programming (FP) and I haven’t looked back since.

So which is better? Which one should I use? Not so fast. The first thing to note is that Functional Programming and Object-Oriented Programming are not necessarily mutually exclusive (yes, I know stating this so early on is anticlimactic but I am hoping it decreases any likelihood of misinterpretation). Secondly, OOP and FP can actually hold a quite-symbiotic relationship (more on this later). But first, let’s start with what OOP and FP are, and then we’ll do a comparison of Functional Programming vs OOP.

Overview of Object-Oriented Programming

OOP is a style of programming that allows us to organize our code in objects (or entities) that can contain and manage their own state and have defined behaviors which may in turn manipulate this state. OOP has 4 basic defining characteristics:

- Encapsulation

- Abstraction

- Inheritance

- Polymorphism

Encapsulation: hiding of data implementation. The state of the object is private and can only be accessed/manipulated in a way deemed safe through public interfaces (methods). The aim of this principle is to protect the object’s integrity by preventing clients to these objects from setting the internals to an invalid/inconsistent state. Theoretically, this reduces complexity and increases the robustness of a software system by limiting the interdependencies of its components. In Scala, this is accomplished through access modifiers placed in front of the state and behaviors. For example:

private val state = new Statefor hiding state and, public, in the case of behavior,

def behavior(s: AnyVal): Unit = state.internalize(s)for open access (in Scala, the absence of an access modifier indicates open access).

Abstraction: similar to (but not to be confused with) encapsulation, abstraction is the hiding of implementation details or reducing the exposure of behavior to what is necessary. The purpose differs from encapsulation in that it is more about reducing human-cognitive complexity and not necessarily preventing invalid/inconsistent states (as in encapsulation). This allows us to reason about the software in a more-manageable way and, in turn, reduce software development and maintenance costs. For example, in a geometry application, the client code may be interested in the perimeter of some shape; the actual calculation of the different types of shapes may not be that important. In Object-Oriented Programming languages, abstraction is accomplished through interfaces and abstract classes. Our example above can defined as so:

trait Shape:

def perimeter: Double

class Triangle(ln1: Double, ln2: Double, ln3: Double) extends Shape:

override def perimeter: Double = ln1 + ln2 + ln3

class GeometryClient(shape: Shape) extends App:

println("shape perimeter: " + shape.perimeter)The complexity-reducing abstraction here is the trait (Scala for interface) Shape (in particular, its unimplemented method, perimeter). The geometry app, GeometryClient, is not really interested in how the perimeter is calculated. It is only interested in the fact that it needs a perimeter to print out. Therefore, it is able to accept the Shape abstraction and calculate its perimeter without knowing how it is calculated. The calculation is actually done in the concrete class Triangle which implements the interface behaviour, perimeter. But, this implementation detail is hidden from the client in order to leverage the mentioned benefits contained in the abstraction design principle.

Inheritance: a way to leverage pre-existing functionality in a different class. Conceptually, a class can have another class or be another class thereby inheriting its accessible functionality. This allows for code-reuse and the ability to modify functionality without actually changing the original code that has presumably already been tested.

As alluded to above, there are two ways to provide inheritance; they are commonly referred to as “is-a” and “has-a” (technically, “has-a” is not inheritance but composition; it is very similar in that it enables OO-style code reuse). In Scala “is-a” is accomplished with the extends and with keywords. For example,

class Parent:

def hello(): Unit = println(s"Hello, I am $whoAmI!")

def whoAmI: String = "the parent"

trait Talk:

def talk(): Unit = println("I like to talk")

class Child extends Parent with Talk:

def apply(): Unit =

hello()

talk()

override def whoAmI: String = "the child"Here the Parent defines what hello() does and the Child does not need to redefine because it serves its purposes quite well. However, whoAmI does not really fit the bill and therefore must be overridden. Notice that, although the Child was able to leverage pre-existing code (code-reuse), it is also able to define custom behavior without ever changing the original code (which in some cases, it may not even be able to) in Parent. Also, a Child is able to talk because it “mixes-in” Talk.

“Has-a”, on the other hand, is simply accomplished through delegation. For example, a gas car will run only because it has an engine:

class Engine:

def run(): Unit = println("vroom!")

class Car:

private val engine = Engine

def run(): Unit = engine.run()Here, again, we see code reuse but, this time, when a car wants to run, it delegates to its private engine which in turn does the actual running.

Polymorphism: (composed of “poly” meaning many + “morphism” meaning change). This is the ability to perform a behavior in different ways depending on the underlying implementation which may or may not be known a priori. This is very powerful as it reduces coupling (no need to code to the particular implementation) as well asincreasing reusability and readability. The operative ingredient to make polymorphism is the interface concept. This enables us to define a contract with the potential client delineating what the behavior will be in terms of input and output types and general nomenclature. Let’s suppose that we want to compute perimeters of different types of shapes but without really knowing which kind at the onset. We can create a contract of computing perimeters for all of the shapes without actually implementing how to do it therein. The implementation is done in the individual fulfillments of the contract, in other words, the concrete classes or specific types of shapes:

trait Shape:

def perimeter: Double

class Triangle(ln1: Double, ln2: Double, ln3: Double) extends Shape:

override def perimeter: Double = ln1 + ln2 + ln3

class Circle(r: Double) extends Shape:

override def perimeter: Double = 2 * Pi * r

class GeometryClient(shapes: List[Shape]) extends App:

println("total perimeters: " + shapes.map(_.perimeter).sum)The GeometryClient does not need to know what type of shape it is in order to have its perimeter computed because it relies on the contract which stipulates that a perimeter will be computed. This contract is the Shape’s perimeter method. The concrete Triangle and Circle classes, in turn, by extending Shape, are (contractually) obligated to determine and implement how a perimeter is actually calculated for their particular kinds of shapes.

Overview of Functional Programming

Programming largely relies on creating instructions that the computer in turn executes. This is known as imperative programming. In FP, on the other hand, programming is accomplished by creating functions and composing them with each other. Functions here are conceptually similar to mathematical functions where values are provided and resultant value(s) are expected after the function does its thing. In imperative programming, the mutability of values, control structures, and side effects are how things get done. For example;

var i = 0

while (i < 10) { // control structure

if (isOdd(i)) { // control structure

println(i + " is odd") // side effect

}

i = i + 1 // mutability

}FP eschews all these and instead relies on functions. How?! And, why?!

Let’s start with the “why” (as in, “why would you ever want to do this?”). More and more, our computing systems rely on multiple computing cores and/or multiple computers to tackle today’s calculation challenges. This is because;

- our appetite for large number crunching has grown with big data analysis ROI realizations

- computer manufacturers have also realized the cost-effectiveness of adding more cores as opposed to increasing the performance of single cores

- the cloud, with its multi-node offerings, has become so accessible

In other words, more money can be made and it is now cheaper to make that money. The result is that individual cores are not that much faster but now there are multiples of them as opposed to just one. In order to exploit this shift in the computing landscape, programs must do their calculations in parallel amongst the resources available. However, this presents a wrinkle in the way we have normally done programming… Now we must ensure that the integrity of our state remains unimpacted. Let’s demonstrate,

var x = 0

x = x + 1What is the value of x at the end of this snippet? 1? Yes, but what if there are multiple threads (in order to leverage multiple computing resources)? Can the answer be 0? Actually, in this case, we really don’t know because of;

- Mutability

- Parallelism

When these two are combined, the result is nondeterminism. So, the things that we’ve grown to love and cherish in computer programming, our bread and butter if you will, are not really safe when attempting to leverage these multiple computing resources. To be fair, there have been some mitigation constructs, such as semaphores, that have valiantly fought against this observation, but as anyone who has survived programming for Wall Street trading systems can attest, it is less than perfect – increasing logical complexities are a breeding ground for the ever-insidious heisenbugs.

The corruption or nondeterminism we have just seen was due to the reassignment of a new value to x, in other words mutability. In order to avoid these troubles, FP is immutable. That would mean that x = x + 1 is no longer accepted. No matter how many threads are running concurrently through this snippet, if there is no mutability, the runtime is never afforded an opportunity to corrupt the value of x because x after initial assignment will always be 0 and never anything else.

Another characteristic of pure functions is referential transparency. A function is referentially transparent if it, along with an instance of its input, can be replaced with its evaluation (i.e., the resulting output) with zero effect on its context. For example, given a function, f, is x => x + 1, when x is 2, f(2) can be replaced with the value 3 with no impact to the whole program. For this to be true, 2 + 1 must always equal 3. If the equation is not able to make this guarantee, it is not referentially transparent. FP functions are referentially transparent. This allows for extreme modularization which in turn enables composability. If we recall, FP is able to do away with control structures (e.g., if-then-else statements, while and for loops) through function composability. Referential transparency also allows us to reason about functions locally without having to clutter up the thoughtspace with contextual dependencies.To summarize, why would one restrict programming to a subset of the language features that do not exhibit mutability, side effects, or referential opaqueness? Because these things make the efficient use of today’s computing resources, if not impossible, manageable .

Now how does FP do it? Let’s take a look at a variation of the earlier example where a list of odds and a list of evens is being created:

var i = 0

val evens = ArrayBuffer.empty[Int]

val odds = ArrayBuffer.empty[Int]

while (i < 10000) { // control structure

if (isEven(i)) { // control structure

odds.addOne(i) // mutability

} else {

evens.addOne(i) // mutability

}

i = i + 1 // mutability

}

(evens, odds)Here we are telling the system how to create a list of evens and a list of odds by the usual trappings of imperative devices. We first create empty corresponding lists followed by creating a looping structure with a nested if-then-else control structure that will correctly append the empty lists predicated on whether the value is considered even or odd. And then we increment the value for the next iteration. Our code resembles a bit of an assembly line where we tell the system each step required to create what we desire. This, in a nutshell, is imperative programming.

We note that these imperative trappings are quite essential to what we are trying to achieve. Let’s see what this would look like in Scala if we did away with all these and instead tried to use functions and function compositions:

(0 until 10000).partition(isEven)0 until 10 is a function that takes a beginning and an exclusive end and, in turn, returns a corresponding sequence. This function is further qualified by the composition with the next method, partition which in turn accepts another function as a predicate argument. Hold up. A function is passed to another function as an argument?! Yes, this is another example of function composition. A function that accepts other functions as arguments is called a higher-order function or HOF. This one in particular, is a first-degree HOF because the function that is passed as an argument does not itself accept another function as its own argument. But, if it did it would be called a second-degree (or higher) HOF. Wow, so reverting back from our tangential note, we have successfully leveraged FP to significantly reduce the verbosity of its imperative counterpart and take readability to the levels of folklore. We are not telling the system how to create these lists of evens and odds, we are simply telling it to do it.

This is all good and well but is it necessary? No… unless you want to leverage today’s multi-resource computing systems. Let’s parallelize the imperative code. Let’s add a semaphore or two and make sure to test it for any race conditions and pray that our tests are sufficient. And remember not to be overzealous in safeguarding the integrity of the state so that we don’t impact performance too much. I’ll wait. Actually, let’s not spend any more time on this. Let’s just try and parallelize on the FP code:

(0 until 10000).par.partition(isOdd)We have just leveraged the composability of FP adding the parallelization method, par, and, voila, we’ve got parallelization!

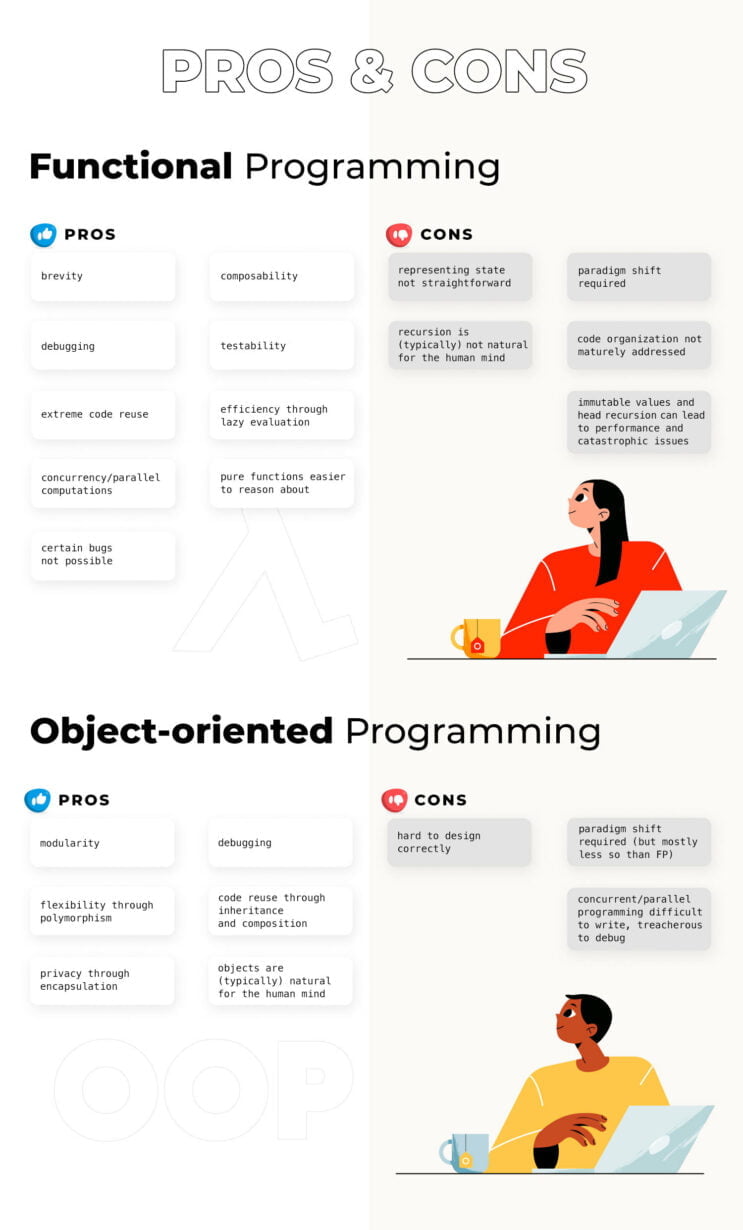

Functional Programming vs OOP; Pros and Cons of each

Now that we have a clearer picture of what FP and OOP is, let’s compare and contrast both by first outlining their pros and cons:

FP Pros

- Brevity – in FP, we tell the computer to do something and not necessarily how to do it. This leads to less code than its imperative counterpart. To reuse the previous example, this piece of imperative code

var i = 0

val evens = ArrayBuffer.empty[Int]

val odds = ArrayBuffer.empty[Int]

while (i < 10000) {

if (isEven(i)) {

odds.addOne(i)

} else {

evens.addOne(i)

}

i = i + 1

}

(evens, odds)can be reduced to this functional equivalent:

(0 until 10000).partition(isEven)This is brevity. And, FP has it. BTW, which one is more readable? FP believes that

brevity leads to improved readability. This example certainly does not disprove that.

- Composability – a topic all unto itself, this describes the ability to compose a set of functions to form a new functionality. This is incredibly powerful because it allows us to solve a large and/or complex problem with a small set of well-thought-out API. For example, workdays can be described by simply composing the days and time methods of a Schedule API (since they all would be of return of type Schedule):

Schedule.days(M to F).time(9 to 5)- Debugging – since pure functions do not change their output based on the change of state (in FP, if state is present, it is immutable), debugging complexity is significantly reduced – the internal values, if errant, will be easier to detect because there are no mysterious external forces at play.

- Testability – since FP enables local reasoning as a byproduct of its referential integrity, one can easily separate the function from its environment/context and test it.

- Extreme code reuse – since pure functions are modular (they don’t concern themselves with how to obtain the input or what to do with the result), they are extremely reusable. Compare

def increment: Unit =

println("Enter the integer to be incremented\n: ")

var x = readln()

x = x + 1

println("The incremented value is: " + x)

}to

def plusOne(x: Int): Int = x + 1Which one is more reusable?

- Efficiency through lazy evaluation – does not eagerly execute an expression but waits until it is actually needed in order to execute. This allows for some computational optimizations. Let’s do a simple demonstration:

xs.map { x =>

(x, computationallyIntensive(x))

}.filter(isEven(_._1)A computationally-intensive calculation is performed on all xs before we filter out the xs that are odd. Since this is an eager (or strict) evaluation, one would expect a lot of computational cycles would be wasted due to the unfortunate order of the composition. Of course, this particular one is an easy scenario to avoid, but more complicated ones may not be so easy. However, if xs were instead lazy:

xs.view.map { x =>

(x, computationallyIntensive(x))

}.filter(isEven(_._1)only the even xs would be candidates for the computation-intensive calculation since at the end of the day they are the only ones necessary, thereby optimizing use of the CPU.

- Concurrency/parallel computations – as elaborated in an earlier section, due to the restrictions imposed on FP (no mutability, side-effects, or control structures), concurrency and parallel computations are much more accessible.

- Pure functions easier to reason about – one does not need to mentally keep track of state changes to understand a block of code.

Functional Programming vs OOP: FP Cons

- The real world is impure – or stated a different way, the real world is full of side effects. Side effects are the ways that a computer program interacts with the outside world. For example,

- printing a document

- logging a debug statement

- inserting a new record to a database table

It doesn’t take much imagination to see how any of these can be a requirement for a particular computer program. However, there are various ways that FP is able to mitigate these requirements and their apparent position against FP principles. Effect systems or functional effects are beyond the scope of this article but, in essence, the effects themselves are treated as values and their execution is delayed as reasonably as possible so as to enjoy much of the benefits of FP (e.g., compositionality, referential transparency).

- Paradigm shift required – FP uses expressions instead of statements. An expression is evaluated to produce a value while a statement is executed to assign variables. For those of us who come from the more common schools of programming (where we are taught to program by typing instructions for the computer to execute), the FP paradigm may be a bit difficult to adopt since it may not seem natural. Whether this is a function of educational background or human-cognitive leanings or some combination of both is up for debate.

- Representing state changes not straightforward – since the state is immutable in FP, one cannot really change the state and a new state must be created to pass on, resulting in a state-“changing” method.

- Code organization not maturely addressed – OOP is the king of code organization because of encapsulation, abstraction, and, perhaps, even inheritance. In FP, functions are the first-class citizens and even though some pure FP languages have module-like constructs to group like-minded functions together, their organizational power pales in comparison to the ones afforded by OOP-language constructs such as classes and objects.

- Recursion is (typically) not natural for the human mind – A loop is a prominent and helpful control structure in imperative programming. However, in FP, control structures are not allowed. So, how is FP able to iterate through lists/enumerations? Recursion. Recursion is typically not easy to wrap the mind around. But sometimes, it comes quite naturally. To offer one such example, let’s look at the Fibonacci series: every number in the series is the sum of the 2 previous ones (if they exist). So,

where

and ns are natural numbers. This relationship is recursive and therefore is quite easily transferable to FP code:

def fib(n: Int): Int =

if n == 0 or n == 1 then n

else fib(n - 2) + fib(n - 1)Even in this exemplary case, without sufficient exposure, a subset of imperative programmers may not find this to be that straightforward.

- Immutable values and head recursion can lead to performance and catastrophic issues – head recursion (recursion where the last expression to be evaluated is not a recursion call (as in the Fibonacci example above where the last expression is the sum of two fib calls and not the fib calls themselves)) requires stack memory which causes latency in overall results. Furthermore, if a head-recursive piece of code consumes more stack memory than that allotted, a catastrophic stackoverflow error will pay a visit. FP can mitigate these risks by resorting to tail recursion instead. Tail recursion avoids creating a new stack frame by executing the call in the current stack frame. One would only need to organize the code so that the recursive call is the actual last expression of the code block to be evaluated. Let’s take a staple FP function for example:

def map[A, B](xs: Seq[A])(f: A => B): Seq[B] =

def loop(xs: Seq[A], acc: Seq[B]): Seq[B] =

if xs.isEmpty then acc

else loop(xs.tail, acc :+ f(xs.head))

loop(xs, Seq())Here, every element of xs is transformed (or mapped) to a new value according to the mapping function f. This, of course, is a little bit more verbose but it is stack-safe because it is able to avoid creating new stack frames because the recursive call to loop() is always the last.

OOP Pros

- Modularity – the process of decomposing a problem into a set of modules to reduce its overall complexity. Abstraction, the OOP principle, really helps in modularization. By organizing a problem into different abstractions, one is not only able to reduce complexity but also to increase modularity.

- Debugging – since well-designed classes usually contain all the information applicable to them (cohesion), the debugging area is reduced to a more manageable size (ideally, avoiding the dreaded “butterfly effect”).

- Flexibility through polymorphism – as expanded on earlier in the section on polymorphism, polymorphism allows the code to be designed in such a way that its implementation can vary depending on the particular need.

- Code reuse through inheritance and composition – in OOP, inheritance and composition are the operative principles that enable code reuse. The code is either written in a “parent” (inheritance) or a “part” (composition) and then leveraged (or called upon) in the “child” or “whole”, respectively.

- Objects are (typically) natural for the human mind – the material world we are born into is made up of innumerable objects – it is our natural world and the world that our mind has been trained on since our inception. OOP, which is made up of objects, has an adoption advantage because of this trained bias in all of us.

Functional Programming vs OOP: OOP Cons

- Paradigm shift required – OOP comes with a whole new vocabulary and concepts such as classes, constructor invocation, overloading, overriding, and others. These have historically presented a challenge in comprehending and mastering Object-Oriented Programming. To be fair, these cognitive hurdles are not as drastic as the ones commonly encountered in mastering Fuctional Programming.

- Hard to design correctly – logically following the paradigm-shift requirement already mentioned, OOP requires the ability to organize the solution to a problem in classes, bound by certain design constraints (which haven’t been mentioned in this article). The design decisions around this are almost endless, with many of them being suboptimal. Mastery of OO design is not a trivial matter

- Concurrent/parallel programming is difficult to write, treacherous to debug – as expanded on in the FP section, concurrent/parallel programming is particularly difficult without the aid of some of the FP principles such as immutability and referential transparency. So while this is not a problem with OOP in particular, it is a problem with imperative programming in general. In the next section, we will see how OOP needs not be imperative programming and how FP and OOP can work together to alleviate these challenges.

FP and OOP (not vs.)

Only siths deal in absolutes – Obi-Wan Kenobi

As mentioned at the beginning, and in spite of all the differing pros and cons mentioned before, FP is not the antithesis of OOP. FP and imperative programming by and large are. The confusion may arise from the fact that most OOP code out in the industry is imperative but so is most code in general. OOP does not necessarily need to be imperative, however. If the language allows for FP and OOP, then it is possible to reap the benefits of FP while also reaping those from OOP. Let’s state the main benefit of OOP: modularization. OOP enables the organisation of code into modules which allows us to reason about the code in a way that is easier to reason about for the human brain. Can we use encapsulation, abstractions, inheritance, and polymorphism in FP code? The answer is a conditional “yes”… if the language provisions it. Many languages nowadays do. Here are some of the more mainstream ones:

- Java (OOP champion) since version 8 has offered FP

- Python has some standard FP functions and treats functions as first-class citizens and optionally offers functions which further enable FP.

- Javascript

- Scala (more OOP than Java) was designed from the very beginning as an FP language.

Let’s demonstrate how we can have state (a staple of Object-Oriented Programming) but still eschew mutability (a staple of imperative programming). Consider a reservation repository that will generate and assign a reservation number to each new reservation. The reservation number can be generated in a number of ways but, for the sake of simplicity, our reservation number will be an integer and incremented by 1 from the last reservation number. This will, no doubt, elicit the idea of mutability in a large subset of the readership since the act of adding a reservation to a repository would change the state of said repository:

val reservations = ReservationRepo.empty

reservations.add(newReservation)

assert(reservations.nonEmpty)This is because add() actually mutates reservations by modifying the actual object to now have a new reservation:

class ReservationRepo:

private val reservations = ArrayBuffer.empty[Reservation]

def add(reservation: Reservation): Unit =

reservations.addOne(reservation)This mutation of state, reservations, is done through addOne().

Is there a way to effectively get the same functionality yet not mutate? Yes. Currently, by adding() returns Unit. But, what if we modify it so that it actually returns a new ReservationRepo with the new desired state:

class ReservationRepo private (reservations: Vector[Reservation]):

def added(reservation: Reservation): ReservationRepo =

ReservationRepo(reservations :+ reservation)So, when adding a reservation, instead of mutating the internal data structure, one simply instantiates a new abstract data type with the new data structure or state. Now, this can be said:

val reservations = ReversationRepo.empty

val withNewReservation = reservations.added(newReservation)

assert(reservations.isEmpty)

assert(withNewReservation.size == 1)

assert(withNewReservation.contains(newReservation))Here we see that reservations continues with its original state even after adding a new reservation. This allows us to reap the parallel-safe benefits of FP and still accomplish our goals of getting a reservation repository reflecting the new state.

Conclusion: Functional Programming vs OOP

Both OOP and FP have their benefits. They are for the most part different. For various reasons, OOP and FP are often pitted against each other. However, as has been demonstrated, this is not necessarily the most useful approach, as they can be used in the same codebase and can work very well together with their benefits neither nullified or diminished.

Another perspective on Functional Programming vs OOP

Interview with Francois Armand

To show the comparison from the other side, we asked Francois, a DevOps and CTO at rudder.io, specialising in FP and JVM ecosystem. He was also interviewed in the Why developers choose ZIO article where you can find a lot of statements according to working and implementing ZIO. Now, let’s jump on to the Functional Programming vs OOP interview with Francois.

What do you think object-oriented programming could learn from functional programming?

Immutability, referential transparency, principles. And actual composition and decomposition of problems.

What do you think functional programming could learn from object-oriented programming?

Module (as in code organisation, not runtime organisation), and dot-notation. That’s about all. It seems to me that the more time passes, the less there is behind “OOP”. The original vision is now better implemented by runtime engines and actor framework. And for the other part of OOP… It feels like mostly cargo culting.

Late binding? After all this time, I’m more and more sure that late binding is a bad idea. The promise of runtime dependency injection always misses the semantics associated with it in complicated cases (concurrency, asynchronicity, etc) and I think a model based on effect management à la ZIO is really much more akin to providing what we really want: runtime extensible behavior of an app in a principled way.

The notion of “smart objects” binding together data and computation on it has proven to be a bad idea in most cases.

The last bastion where it seems to make sense is in UI, where there are a lot of local states (ie, not data processing). But even here, functional models bring a nice theory on top of cargo-culting. For example, ELM is a really pleasing model to use for UI. But the theory is complicated.

Even JVM is going to dumb data structure with Valhalla.

The last bit is variance, and this one is actually interesting. ZIO opens a new idea of “what Functional Programming is with objects parts” and shows what you can get in terms of type inference, boiler-plate-lessness and raw power with this hybrid model. It’s extremely interesting.

How do you think functional programming can help us to solve problems related to concurrency that occur in the object-oriented world?

I’m not sure what specific concurrency problems that only occur in the OO world are. Pure FP runtimes are better suited for this by construction, be it Haskell or ZIO. But the real gain here is principled effect management. – just to be sure what you mean by FP.

For example: Scala by itself, even if presented as a functional programming language, does not help much on concurrency.

Scala with Akka helps a lot, but Akka is not really presented as an FP framework, it’s more the quintessence of OOP.

Scala with ZIO helps a lot, and is a good example of what an FP runtime can bring to these subjects.

What do you think about the performance of applications written using functional style?

Very good. Although it depends on what problem you are handling.

I mean, in 90% of apps, the optimisation that matters is “finding a better model for the computations we need to do, either in the flow of computation, their location, or the data layout we use”. So what matters is the capacity of deconstructing problems, composing solutions, reorganizing things. When it comes to all that, FP helps A LOT.

But for cases where you need to go as fast as possible on a given task, the problem is at the runtime level. Today, FP gives choices on RAM/throughout/CPU that you can’t easily optimize by hand. So in these cases, a language such as Rust is better suited.

Do you think developers should learn functional programming in Scala? Why?

Scala was a complicated language that put A LOT on the plate, with a very steep learning curve. I think it’s much, much easier to learn FP with a simpler language like ELM (I’m used to recommending OCaml or F#, Haskell being full of incidental complexity for its own sake).

Elm is very good for learning: pure FP, no higher level type which trades repeating yourself for self-clarity of code (good when you are learning), a direct use case with an easy visualization, and a helpful compiler that tells you what you did wrong, and lots of good IDE.

Scala 3 may be simpler, but its style is waaaayyyy behind ELM for “first FP lang”. It’s too easy to get into bad habits (with JVM under the hood, the whole runtime model is extremely complicated) and it is not pure with a mix of FP with other paradigms

What do you think are the business benefits for a company that decides to use Scala, applying the functional style?

From my own experience, running a company which chose Scala in 2010 brings more and more FP purity into our app: it allows a much much bigger ratio of “maintainability of the app / and number of devs. We built our software (a complex devops tool with hundreds of thousands of Scala code) with 3 devs for the whole backend, and after all that time, we are still able to massively refactor it today.

And the more we add ZIO into it, the easier it is to change things without side effects (sorry ;) )

What do you think about Scala 3? Are you already using it or planning to do so?

We don’t use it, because the cost of migration compared to the gain is ridiculously low for us.Nobody on the team knows Scala 3, it is almost a totally new language, and in all cases, our business model forces us to maintain the old version of our software in Scala 2, already deployed for years.

We will still do it though, and we really hope the migration story won’t be long :)

If we were starting a green app today, the choice would be a no-brainer :Scala 3 is an overwhelmingly positive change in the language.

What do you think about effect libraries such as ZIO, Cats effect, Monix? Do you use any of them and why?

ZIO. A lot. In my opinion, it’s Scala finding its own personal flavour of FP, embracing the strengths of the language to make it a unique, wonderful valuable proposal. ZIO 2 confirms that feeling, with exceptional new pure FP features, unique to ZIO, such as scoped managed resources (https://twitter.com/jdegoes/status/1503308652148670464…)

Is Functional Programming vs. OOP a valid comparison?

It is, since people do it a lot. This means that people want to know what the differences are, and what we mean when we use one term or another. It helps to understand what meaning people put behind each of these terms, from the trivial “programming with (pure, by def) function” to “higher kinded types with effect management and the associated runtime”, from “encapsulating data and behavior in objects” to variance, late binding, modules, actors, etc.

Are FP and OOP mutually exclusive?

What a strange question? We have had 30 years of common existence in software, how can they be? We can’t really annihilate the past :)

And if I guess the real question is: “Can you do ‘real’ OOP if you want to also do FP, and the opposite?”, it really looks like an overgeneralization. It really depends on the flavor of the language and style you choose. Mixing Haskell with OOP? This could be a bad idea, involving a lot of swimming against the flow. In ZIO ? Hell yes, it’s designed for that.

More on ZIO

- ZIO Test: What, Why, and How?

- ZIO SQL: Type-safe SQL for ZIO applications

- Introduction to Programming with ZIO Functional Effects

Read also

- How to learn ZIO? (And Functional Programming)

- Scaling Up From 10 To 40 Developers In Less Than A Year

- OpenTelemetry from a bird’s eye view: a few noteworthy parts of the project

- The OpenTelemetry + Mesmer duo: state of the Mesmer project

- Is Scala The Language Of 2023? 10 Developers Share Their Scala Projects Experiences